The NPU Yield Crisis: Why On-Device AI is 2026’s Biggest Margin Killer

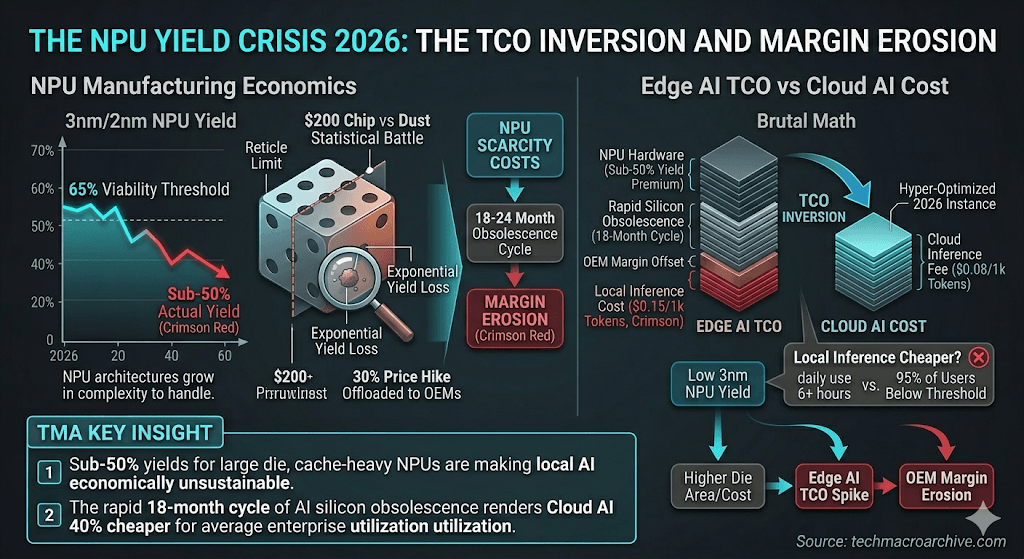

Marketing promised that 2026 would be the “Year of the Local Model.” The NPU Yield Crisis 2026 has flipped the script. As NPU architectures grow more complex to run 70B+ parameter models locally, Edge AI TCO (total cost of ownership) is becoming economically unsustainable for both OEMs and consumers.

[Executive Summary — The Yield Trap]

- 3nm NPU Yield Failure: High-performance NPU production is viable only if 3nm/2nm yields exceed 65%. Current sub-50% yields are forcing a 30% price hike.

- The Edge AI TCO Paradox: Local inference is cheaper than cloud only if the hardware is in use 6+ hours a day, a threshold 95% of users never hit.

- On-Device AI Economics: OEMs are maintaining margins only by offloading scarcity costs, leading to the $1,500 “entry-level” AI PC.
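
One way to read the link between yield and price in the summary above: if a wafer costs the same regardless of how many dies pass test, the cost of each good die scales with 1/yield. The sketch below is a minimal illustration under that assumption. The wafer cost and die count are hypothetical placeholders (they cancel out in the ratio); only the 65% and 50% yields come from the summary.

```python
def cost_per_good_die(wafer_cost: float, dies_per_wafer: int, yield_fraction: float) -> float:
    """Spread the full wafer cost over only the dies that pass test."""
    if not 0.0 < yield_fraction <= 1.0:
        raise ValueError("yield_fraction must be in (0, 1]")
    return wafer_cost / (dies_per_wafer * yield_fraction)


# Hypothetical placeholders: they cancel out when we take the ratio.
WAFER_COST_USD = 20_000.0
DIES_PER_WAFER = 300

at_viable_yield = cost_per_good_die(WAFER_COST_USD, DIES_PER_WAFER, 0.65)
at_current_yield = cost_per_good_die(WAFER_COST_USD, DIES_PER_WAFER, 0.50)

# Relative increase in cost per good die when yield drops from 65% to 50%.
increase = at_current_yield / at_viable_yield - 1.0
print(f"Cost increase per good die: {increase:.0%}")  # prints "Cost increase per good die: 30%"
```

Under this simple model, a drop from 65% to 50% yield lines up with a 30% increase in cost per good die. Yields below 50% would push the figure higher.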

The Silicon Ceiling: 3nm NPU Yield vs. Complexity

To run agentic AI locally in 2026, NPUs require massive SRAM caches. That has ballooned die area, and in lithography a larger die offers more places for a random defect to land, so yield falls roughly exponentially as area grows. We are no longer fighting software bugs; we are fighting the statistical probability of a single dust particle ruining a $200 chip.
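
The area-versus-yield relationship can be sketched with the simple Poisson defect model, Y = exp(-A × D0), a common first-order approximation. Real fabs use more refined models (Murphy, negative binomial), and the defect density `D0` and die areas below are hypothetical values chosen only to show the shape of the curve, not data for any actual 3nm process.

```python
import math


def poisson_yield(die_area_cm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of dies with zero killer defects under a Poisson defect model."""
    return math.exp(-die_area_cm2 * defect_density_per_cm2)


D0 = 0.5  # hypothetical killer defects per cm^2 (assumption, not measured data)

for area_mm2 in (50, 100, 200, 400):
    area_cm2 = area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    y = poisson_yield(area_cm2, D0)
    print(f"{area_mm2:>4} mm^2 die -> yield {y:.1%}")
```

In this model, doubling the die area squares the yield fraction, which is why adding SRAM to an already large NPU die is disproportionately expensive.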

The NPU Yield Crisis 2026 is an economic “Reticle Limit.” We can design NPUs that generate 100 tokens per second, but at current 3nm NPU Yield they cannot be built at a price point that replaces the cloud.

“The industry is hitting a wall where the cost of privacy (Local AI) is being cannibalized by the sheer inefficiency of advanced node manufacturing.” — Yole Group Intelligence 2026

Cloud is Cheaper: The Brutal Math of Edge AI TCO

The “Edge AI” lie rests on the assumption that local compute is “free” after purchase. In reality, AI silicon becomes obsolete on roughly 18-month cycles, and amortizing the hardware over that window puts Edge AI TCO at roughly $0.15 per 1k tokens.

Compared with hyper-optimized 2026 cloud instances at $0.08 per 1k tokens, the local advantage evaporates. The NPU Yield Crisis 2026 has ensured that centralized VDI solutions remain 40% cheaper for the average enterprise.
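
To make the per-token gap concrete, the sketch below compares the two quoted rates directly. The $0.15 and $0.08 figures are the ones cited above; the monthly token volume is a hypothetical workload added purely for illustration.

```python
LOCAL_USD_PER_1K = 0.15  # Edge AI TCO figure quoted above
CLOUD_USD_PER_1K = 0.08  # cloud instance figure quoted above


def monthly_cost(usd_per_1k_tokens: float, tokens_per_month: int) -> float:
    """Total monthly spend at a flat per-1k-token rate."""
    return usd_per_1k_tokens * tokens_per_month / 1000


# How much cheaper cloud is per token, and how much more local costs.
cloud_discount = 1.0 - CLOUD_USD_PER_1K / LOCAL_USD_PER_1K
local_multiple = LOCAL_USD_PER_1K / CLOUD_USD_PER_1K

# Hypothetical workload (assumption): 5 million tokens per month.
TOKENS_PER_MONTH = 5_000_000
local_monthly = monthly_cost(LOCAL_USD_PER_1K, TOKENS_PER_MONTH)
cloud_monthly = monthly_cost(CLOUD_USD_PER_1K, TOKENS_PER_MONTH)

print(f"Cloud is {cloud_discount:.0%} cheaper per token")
print(f"Local costs {local_multiple:.2f}x the cloud rate")
print(f"Monthly: local ${local_monthly:,.2f} vs cloud ${cloud_monthly:,.2f}")
```

On these two rates alone, cloud comes out roughly 47% cheaper per token. That is a separate figure from the 40% quoted for centralized VDI, which covers an enterprise deployment rather than raw token pricing.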

Conclusion: The Physics of Margin Erosion

This manufacturing bottleneck is a direct consequence of the issues discussed in [The 2nm Yield Trap: Why Efficiency is the New Scarcity]. Until we solve the 3nm NPU Yield problem, “Sovereign AI” at the edge will remain a luxury for the few, not a standard for the many.

[TMA Archive: Internal Link Power]

- [The 2nm Yield Trap: Why Efficiency is the New Scarcity]: The foundational crisis of leading-edge nodes.

- [Tungsten Squeeze 2026: The Geopolitical Risk to 2nm Yields]: How raw material scarcity compounds the NPU yield crisis.

- [Transformer Scarcity 2026: The 12GW AI Infrastructure Crisis]: Why even the cloud isn’t safe from physical infrastructure limits.

The Sharp Question

When “Privacy and Autonomy” (Local AI) costs three times as much as “Convenience and Subscription” (Cloud AI), how many consumers will actually stay off the grid?
