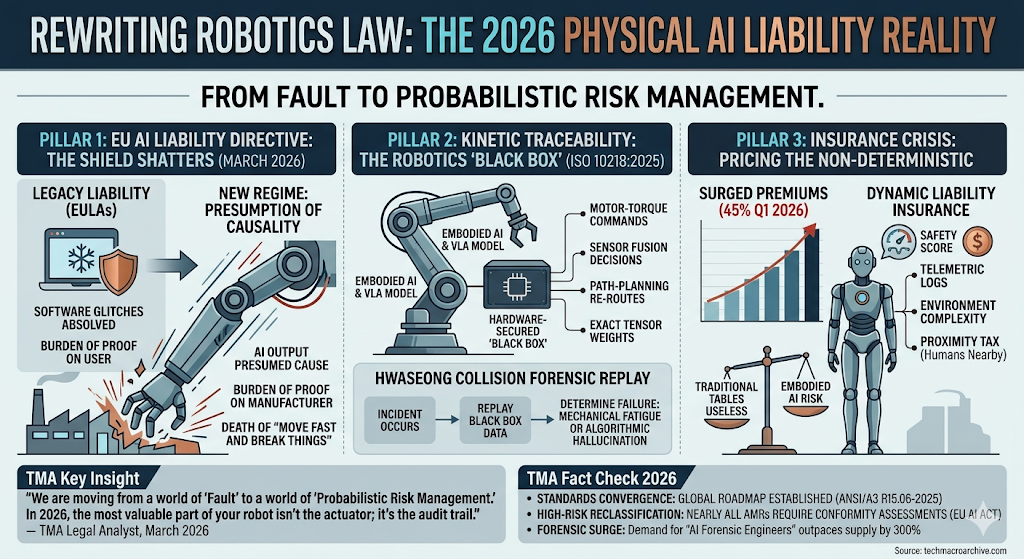

In March 2026, the legal framework for robotics is being rewritten. From the EU’s “Presumption of Causality” to new ISO safety standards, explore the new reality of Physical AI liability.

The End of “Software as a Service” Liability

For decades, software developers hid behind End User License Agreements (EULAs) that absolved them of almost all responsibility for “glitches.” But in March 2026, that shield is shattering. When a Vision-Language-Action (VLA) model governs a 150 kg humanoid robot, a “glitch” isn’t a frozen screen. It’s a crushed limb or a destroyed factory line.

The regulatory hammer is falling in the form of the EU AI Liability Directive. Its most radical provision is the “Presumption of Causality.” Under this new regime, if an autonomous system causes harm, the court presumes the AI’s output was the cause unless the manufacturer rebuts that presumption. In practice, the burden of proof shifts to the manufacturer: you must show that your neural network followed every safety protocol, or you pay. This is the death of “move fast and break things” in the physical world.

Kinetic Traceability: The Robotics “Black Box”

The technical response to this legal pressure is the rapid adoption of ISO 10218:2025. Its two parts (ISO 10218-1 for the robots themselves, ISO 10218-2 for robot applications and cells) aren’t just suggestions; they are the new gatekeepers of market access. The core requirement for 2026 is Kinetic Traceability.

Every motor-torque command, every sensor fusion decision, and every path-planning re-route must now be logged in a hardware-secured “Black Box.” When an incident occurs, like the widely reported Hwaseong factory collision last month, forensic teams no longer guess what the AI was “thinking.” They replay the logged environmental inputs against the exact model weights that were deployed to determine whether the failure was mechanical fatigue or an algorithmic hallucination.
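One way to picture the “Black Box” idea is a hash-chained, append-only log, where each record commits to the one before it so that later edits become detectable. The sketch below is illustrative only: the record fields (`t_ms`, `kind`, `model_version`), the function names, and the use of SHA-256 are assumptions made for this example, not requirements taken from ISO 10218:2025 or any vendor’s logger. It is also software-only; a hardware-secured recorder would add signing and protected storage on top.

```python
import hashlib
import json

# Placeholder "previous hash" for the first record (an assumption of this sketch).
GENESIS_HASH = "0" * 64


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous record's hash together with this record's payload."""
    # Canonical JSON (sorted keys, fixed separators) so equal payloads hash equally.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log: list, payload: dict) -> dict:
    """Append a record that is chained to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    record = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Return True only if every record still matches its stored hash and link."""
    prev_hash = GENESIS_HASH
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["payload"]):
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical events: one torque command and one path re-plan.
log = []
append_record(log, {"t_ms": 0, "kind": "torque_cmd", "joint": "elbow_l",
                    "nm": 12.5, "model_version": "vla-demo-1"})
append_record(log, {"t_ms": 4, "kind": "replan", "reason": "obstacle",
                    "model_version": "vla-demo-1"})
intact = verify_chain(log)

# Simulate someone quietly editing a logged torque value after the fact.
tampered = json.loads(json.dumps(log))  # deep copy via JSON round-trip
tampered[0]["payload"]["nm"] = 1.0
tamper_detected = not verify_chain(tampered)
```

Logging a model version identifier with each event, rather than the weights themselves, is what would let a forensic team later pair the recorded inputs with the model that was actually deployed.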

The Insurance Crisis: Pricing the Non-Deterministic

The real friction of 2026 is happening in the insurance boardrooms. Underwriters are reeling. Traditional actuarial tables are useless against “Embodied AI” that learns and evolves its behavior in real time.

As a result, premiums for robotics deployments have surged by 45% this quarter. We are seeing the rise of “Dynamic Liability Insurance,” where premiums are adjusted in real time based on the robot’s “Safety Score,” a metric derived from its latest telemetry logs and the complexity of the unstructured environment it inhabits. If your robot is working near humans, you pay the “Proximity Tax.”
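To make the pricing logic concrete, here is a minimal sketch of how such a premium might be computed. Everything numeric in it is a hypothetical placeholder: the 0-to-1 scales, the loading factors, the 25% proximity surcharge, and the names `RiskSnapshot` and `dynamic_premium` are assumptions for illustration, not figures from any insurer or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskSnapshot:
    """Hypothetical inputs an underwriter might derive from telemetry."""
    safety_score: float    # 0.0 (worst) to 1.0 (best), assumed scale
    env_complexity: float  # 0.0 (structured cell) to 1.0 (fully unstructured)
    near_humans: bool      # True if the robot shares space with people


def dynamic_premium(
    base: float,
    snap: RiskSnapshot,
    max_score_loading: float = 1.0,     # assumed: a zero score doubles the base
    max_env_loading: float = 0.5,       # assumed: full complexity adds 50%
    proximity_surcharge: float = 0.25,  # assumed "Proximity Tax" of 25%
) -> float:
    """Scale a base premium by additive risk loadings."""
    if not 0.0 <= snap.safety_score <= 1.0:
        raise ValueError("safety_score must be between 0 and 1")
    if not 0.0 <= snap.env_complexity <= 1.0:
        raise ValueError("env_complexity must be between 0 and 1")

    multiplier = 1.0
    multiplier += max_score_loading * (1.0 - snap.safety_score)
    multiplier += max_env_loading * snap.env_complexity
    if snap.near_humans:
        multiplier += proximity_surcharge
    return round(base * multiplier, 2)


# A caged robot with a perfect score pays only the base premium.
caged = dynamic_premium(1000.0, RiskSnapshot(1.0, 0.0, False))

# A collaborative robot with a lower score in a messier space pays more.
cobot = dynamic_premium(1000.0, RiskSnapshot(0.8, 0.5, True))
```

With these placeholder loadings, the collaborative deployment comes out at 1.7 times the base premium. A real underwriter would presumably calibrate such factors against claims data; the additive form here was chosen only because it is easy to read.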

“We are moving from a world of ‘Fault’ to a world of ‘Probabilistic Risk Management.’ In 2026, the most valuable part of your robot isn’t the actuator; it’s the audit trail.” — TMA Legal Analyst, March 2026.

TMA Fact Check 2026

- The Standards Convergence: The publication of ANSI/A3 R15.06-2025 in January has finally harmonized US and international safety standards, creating a single “Global Compliance Roadmap” for humanoid manufacturers.

- The High-Risk Reclassification: Under the EU AI Act, nearly all autonomous mobile robots (AMRs) operating in public or collaborative spaces are now classified as “High-Risk,” requiring third-party conformity assessments before deployment.

- The Forensic Surge: Demand for “AI Forensic Engineers”—specialists who can deconstruct VLA failure states for court proceedings—has outpaced supply by 300% this year, making it the most lucrative niche in the 2026 labor market.

The Sharp Question

As we move toward a world where the law presumes the AI is guilty until proven innocent, will the cost of “Absolute Safety” stifle the very innovation that Friend-shoring 2.0 requires? Or are we finally treating silicon-based labor with the same gravity we afford the carbon-based variety?
