Discover why NPU Architecture Efficiency is the most critical metric for 2026 AI deployments, balancing power consumption with high-speed inference.

NPU Architecture Efficiency: The Decisive Metric for 2026 AI Infrastructure

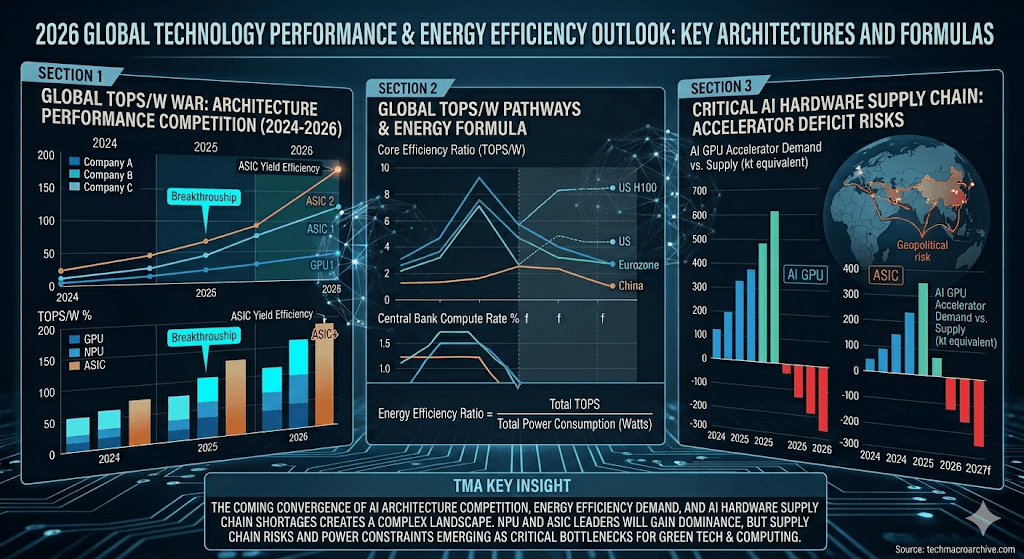

NPU Architecture Efficiency has become both the main bottleneck and the main opportunity for enterprises scaling AI in 2026, shifting the focus from raw compute throughput to sustainable performance per watt. As global energy costs rise and demand for real-time inference at the edge grows, understanding the structural advantages of Neural Processing Units is no longer optional for CTOs.

Executive Summary: The Shift to Efficiency-First AI

- Energy Dominance: 2026 NPU designs prioritize low-leakage logic over brute-force clock speeds to maximize battery life in mobile and edge devices.

- Data Bottleneck Resolution: Integrated memory architectures (In-Memory Computing) are reducing the latency and power cost of data movement by up to 40%.

- Scalability ROI: Efficiency-driven NPU clusters offer a 3x better Return on Investment over three years compared to traditional general-purpose GPU setups.

| Architecture Type | Energy Efficiency (TOPS/W) | Latency | Scalability Index |
| --- | --- | --- | --- |
| Traditional GPU | 2.5 – 4.0 | High (batch dependent) | Moderate |
| First-Gen NPU | 8.0 – 12.0 | Medium | High |
| 2026 Next-Gen NPU | 25.0 – 45.0 | Ultra-Low | Maximum |
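To make the TOPS/W column more concrete, the figures can be converted into energy per operation. Since 1 TOPS/W equals 10^12 operations per joule, the energy per operation in picojoules is simply the reciprocal of the TOPS/W value. The sketch below applies that arithmetic to the ranges in the table. The workload size `hypothetical_ops` is an illustrative assumption, not a figure from this article, and the calculation assumes the rated efficiency is actually sustained.

```python
# Sketch: turn the table's TOPS/W ranges into energy per operation.
# 1 TOPS/W = 1e12 operations per joule, so pJ per op = 1 / (TOPS/W).

EFFICIENCY_RANGES = {
    "Traditional GPU": (2.5, 4.0),
    "First-Gen NPU": (8.0, 12.0),
    "2026 Next-Gen NPU": (25.0, 45.0),
}


def picojoules_per_op(tops_per_watt: float) -> float:
    """Energy per operation in picojoules for a given TOPS/W rating."""
    if tops_per_watt <= 0:
        raise ValueError("TOPS/W must be positive")
    return 1.0 / tops_per_watt


def joules_for_workload(total_ops: float, tops_per_watt: float) -> float:
    """Energy in joules to execute total_ops at the rated efficiency."""
    return total_ops * picojoules_per_op(tops_per_watt) * 1e-12


if __name__ == "__main__":
    # Hypothetical workload size (assumption for illustration only).
    hypothetical_ops = 2e12
    for name, (low, high) in EFFICIENCY_RANGES.items():
        best = joules_for_workload(hypothetical_ops, high)
        worst = joules_for_workload(hypothetical_ops, low)
        print(f"{name}: {best:.3f} to {worst:.3f} J per inference")
```

Real devices rarely sustain their rated TOPS/W across a whole workload, so these values are best read as lower bounds on energy.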

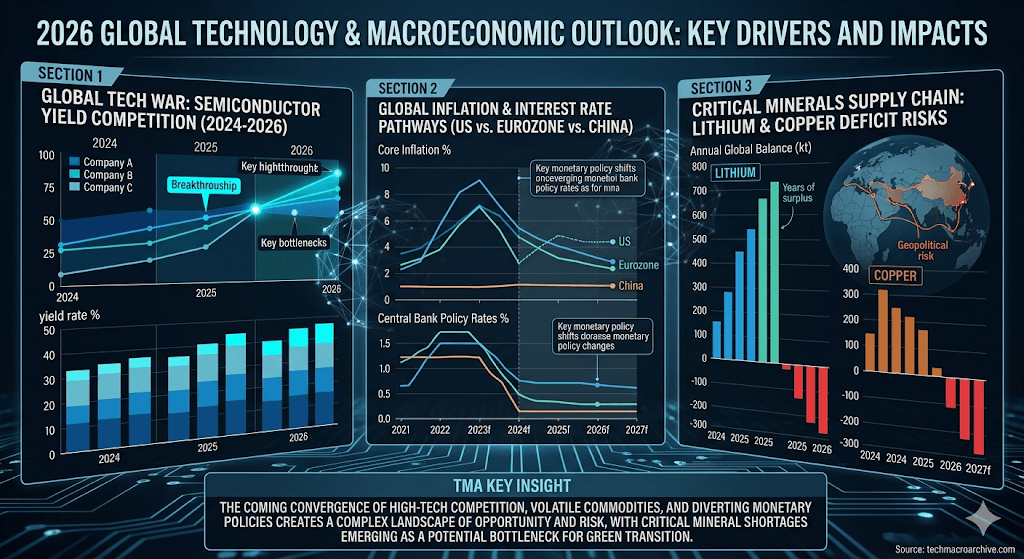

Market & Economic Friction

The global shift toward [Sustainable AI Infrastructure] has created a friction point: legacy data centers are struggling to keep up with the thermal demands of modern LLMs. By adopting NPUs with high architectural efficiency, firms can cut the cooling overheads that currently consume nearly 30% of operational budgets. The economic imperative is clear: efficiency is the only path to profitable AI scaling.

Technical Deep-Dive & ROI Analysis

The 2026 efficiency leap rests on two techniques: “Sparse Computing” and “Dynamic Precision.” Where a dense GPU kernel multiplies every element of a tensor, zeros included, a sparsity-aware NPU skips zero-valued data and spends energy only on active neurons. Dynamic precision complements this by running each layer at the lowest bit width that preserves accuracy. Together, these choices directly influence [Real-time Inference ROI], as they allow for smaller, cheaper power supplies and longer hardware lifecycles.
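The two ideas can be illustrated in software, with the caveat that real NPUs implement them in hardware and in vendor-specific ways. The sketch below is a minimal pure-Python model, not any vendor's method: `sparse_dot` counts only the multiply-accumulates performed on nonzero activations, and `pick_precision` tries the lowest bit width whose quantization error stays under a tolerance. All function names, the symmetric quantization scheme, and the candidate bit widths are assumptions chosen for illustration.

```python
# Sketch of zero-skipping and precision selection (illustrative model only).


def sparse_dot(activations, weights):
    """Dot product that skips terms whose activation is zero.

    Returns (result, macs), where macs counts the multiply-accumulates
    actually performed.
    """
    total = 0.0
    macs = 0
    for a, w in zip(activations, weights):
        if a == 0:
            continue  # a zero activation contributes nothing, so skip it
        total += a * w
        macs += 1
    return total, macs


def quantize_symmetric(values, bits):
    """Map floats to signed integers in [-qmax, qmax] with a single scale."""
    qmax = 2 ** (bits - 1) - 1
    peak = max((abs(v) for v in values), default=0.0)
    scale = peak / qmax if peak else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def pick_precision(values, tolerance, candidates=(4, 8)):
    """Return the lowest candidate bit width whose worst-case
    reconstruction error is within tolerance, or None if none qualifies."""
    for bits in candidates:
        quantized, scale = quantize_symmetric(values, bits)
        error = max(
            (abs(v - q * scale) for v, q in zip(values, quantized)),
            default=0.0,
        )
        if error <= tolerance:
            return bits
    return None


if __name__ == "__main__":
    acts = [0.0, 2.0, 0.0, 1.0]
    wts = [5.0, 3.0, 7.0, -1.0]
    result, macs = sparse_dot(acts, wts)
    print(f"result={result}, MACs performed={macs} of {len(acts)}")
    print("bits chosen:", pick_precision([1.0, 0.1], tolerance=0.01))
```

In this model the savings scale with the fraction of zeros in the activations; a tensor with no zeros gains nothing from skipping.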

“The true measure of AI progress in 2026 isn’t how many parameters we can train, but how many tokens we can generate per milliwatt.” — By TMA

2026 Investment Roadmap & Risk Factors

When auditing NPU vendors this year, investors must look beyond peak TOPS (Tera Operations Per Second). The risk lies in “Dark Silicon”: parts of the chip that must stay idle because powering them would exceed the thermal budget. A high-efficiency architecture keeps 90% of the silicon active during peak loads, minimizing wasted capital. Priority should be given to architectures supporting hybrid INT4 and FP8 precision.
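One rough way to look beyond peak TOPS is to discount the headline figure by the fraction of silicon that can stay active under thermal limits. The sketch below assumes, as a simplification, that sustained throughput scales linearly with that active fraction. The function names and the example price and TOPS figures are hypothetical and not taken from any vendor.

```python
# Sketch: discount peak TOPS by the fraction of silicon that stays active.
# Assumes throughput scales linearly with active silicon (a simplification).


def effective_tops(peak_tops: float, active_fraction: float) -> float:
    """Sustained throughput once dark silicon is accounted for."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return peak_tops * active_fraction


def cost_per_effective_tops(price: float, peak_tops: float,
                            active_fraction: float) -> float:
    """Purchase price divided by sustained (not peak) throughput."""
    sustained = effective_tops(peak_tops, active_fraction)
    if sustained == 0:
        raise ValueError("no active silicon, cost per TOPS is undefined")
    return price / sustained


if __name__ == "__main__":
    # Hypothetical chips with the same price and peak rating.
    for label, fraction in (("90% active", 0.9), ("60% active", 0.6)):
        cost = cost_per_effective_tops(1000.0, 100.0, fraction)
        print(f"{label}: {cost:.2f} per sustained TOPS")
```

Two chips with identical peak ratings can differ substantially in cost per sustained TOPS once the active fraction is taken into account, which is the comparison a vendor audit would need.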

Conclusion: The Era of “Cold AI”

The race for computational supremacy has ended; the race for computational elegance has begun. In 2026, NPU Architecture Efficiency is the silent engine driving the “Cold AI” revolution—where powerful intelligence operates silently, coolly, and profitably. Those who fail to optimize their silicon strategy today will find their margins evaporated by the heat of their own servers tomorrow.

Related Tech Insights:

- [NPU Yield Crisis 2026: Why Edge AI is Killing Margins]

- [The 1GW Power Wall: Why AI’s TCO is Exploding in 2026]

- [The On-Device AI Mirage: Why Your 2026 PC Is a Local LLM Prison]

Sharp Question:

Is your current infrastructure generating intelligence, or is it just generating heat?
