Neuromorphic Edge Economics: Redefining AI ROI Beyond the 1GW Power Wall

Introduction: The Thermodynamic Limit of Intelligence

As of May 2026, the AI industry has collided with the “1GW Power Wall.” The unsustainable trajectory of cloud-based LLM scaling has turned massive data centers from strategic assets into fiscal liabilities. As energy costs and cooling overheads cannibalize corporate margins, the question has shifted from “How large is the model?” to “How many millijoules per decision?”

Welcome to the era of Neuromorphic Edge Economics.

Executive Summary: The Efficiency Pivot

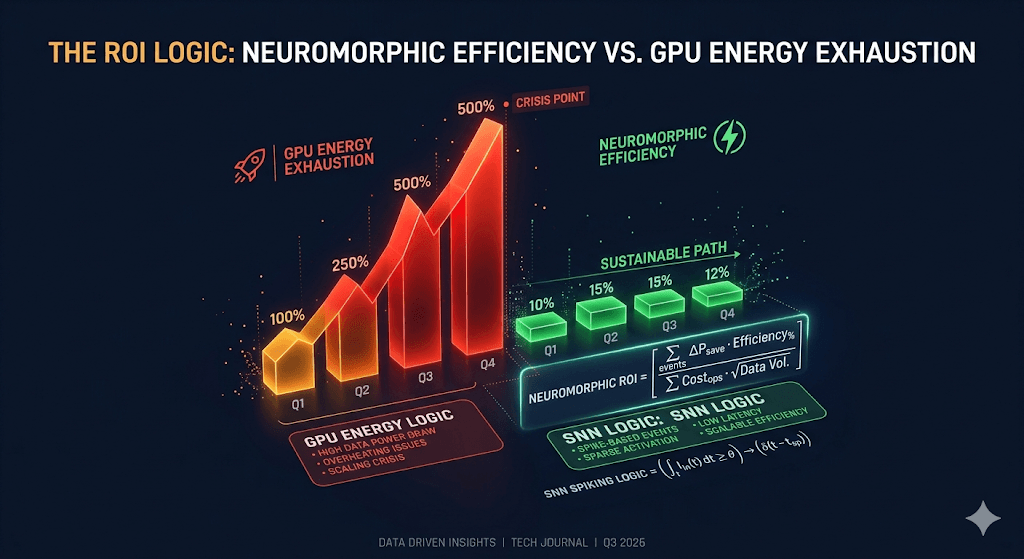

Neuromorphic Edge Economics represents a fundamental shift in infrastructure: it prioritizes brain-like energy efficiency to overcome the diminishing returns of traditional silicon scaling. By mimicking the human brain’s event-driven processing, enterprises aim to decouple AI growth from electricity costs.

| Metric | Legacy GPU/NPU Edge | Neuromorphic (SNN) | Economic Impact |
| --- | --- | --- | --- |
| Energy Consumption | High (Continuous) | Ultra-Low (Event-driven) | 90% OpEx Reduction |
| Inferences Per Watt (IPW) | Low Base | Frontier Grade | Efficiency Alpha |
| Response Latency | 15 ms – 100 ms | < 1 ms (On-chip) | Real-time Safety |
| Hardware Lifecycle | 2-3 Years (Heat wear) | 5+ Years (Cool running) | Lower CapEx Replacement |
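The energy row of the table can be made concrete with simple duty-cycle arithmetic. The sketch below is only an illustration: the function name `annual_energy_cost`, the wattages, the 5% duty cycle, and the electricity price are all hypothetical values chosen for the example, not figures from this article, and the result covers electricity only rather than total OpEx.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(active_watts, idle_watts, duty_cycle, price_per_kwh):
    """Yearly electricity cost for one device.

    duty_cycle is the fraction of time (0..1) spent in the active state;
    the rest of the time the device draws idle_watts.
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    # Time-weighted average power draw in watts.
    avg_watts = duty_cycle * active_watts + (1.0 - duty_cycle) * idle_watts
    kwh_per_year = avg_watts * HOURS_PER_YEAR / 1000.0
    return kwh_per_year * price_per_kwh


# Hypothetical always-on accelerator: draws 10 W whether or not events arrive.
always_on = annual_energy_cost(10.0, 10.0, duty_cycle=1.0, price_per_kwh=0.15)

# Hypothetical event-driven device: 10 W while active (5% of the time),
# 0.1 W while waiting for events.
event_driven = annual_energy_cost(10.0, 0.1, duty_cycle=0.05, price_per_kwh=0.15)

saving = 1.0 - event_driven / always_on
print(f"always-on: {always_on:.2f}/yr, event-driven: {event_driven:.2f}/yr, "
      f"saving: {saving:.0%}")
```

Under these assumed inputs the saving depends almost entirely on how rarely events occur and how low the idle draw is, so a real estimate would need measured duty cycles from the target workload.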

Technical Deep-Dive: Smashing the Memory Wall

Traditional von Neumann architectures suffer from the “Memory Wall”: moving data between the processor and RAM can consume as much as 90% of total system energy. Neuromorphic chips integrate memory and processing into a single in-memory compute unit, so most data never has to make that trip.

Just as the human brain operates on roughly 20 watts, Spiking Neural Networks (SNNs) fire only when a data event occurs, which removes most of the power otherwise wasted while idle. This is not just a hardware upgrade; it is the inevitable economic conclusion to the unsustainable power trajectories of 2024-2025.
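To show what “fire only when a data event occurs” means in practice, here is a minimal sketch of a single leaky integrate-and-fire (LIF) neuron, a common textbook SNN model. The function name `lif_spike_times` and the threshold, leak, and input values are assumptions made for illustration; they do not describe any particular neuromorphic chip.

```python
def lif_spike_times(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron in discrete time.

    Each step the membrane potential decays by `leak`, then adds the input.
    When it reaches `threshold` the neuron emits a spike and resets.
    Returns the list of time steps at which spikes occurred.
    """
    v = reset
    spikes = []
    for t, i_in in enumerate(input_current):
        v = leak * v + i_in          # leak, then integrate the input
        if v >= threshold:
            spikes.append(t)         # output is produced only on this event
            v = reset
    return spikes


# No input events: the neuron stays silent, so nothing downstream is triggered.
quiet = [0.0] * 10
# Two short bursts of input (hypothetical sensor activity).
burst = [0.0, 0.0, 0.6, 0.6, 0.0, 0.0, 0.0, 0.6, 0.6, 0.6]

print(lif_spike_times(quiet))   # []
print(lif_spike_times(burst))   # [3, 8]
```

This Python loop still visits every time step, so it models the sparsity of the output rather than the energy behavior itself. On event-driven hardware the expectation is that silent periods trigger little or no switching activity.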

2026 Investment Roadmap: The Rise of Vertical Intelligence

In the 2026 landscape, the winning metric is no longer TFLOPS, but IPW (Inferences Per Watt).
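IPW is straightforward to compute from a benchmark run. The sketch below assumes IPW means throughput (inferences per second) divided by average power in watts, which is numerically the same as inferences per joule. The function names and the sample measurements are hypothetical.

```python
def inferences_per_watt(num_inferences, elapsed_seconds, avg_power_watts):
    """Throughput (inferences/s) divided by average power (W).

    Dimensionally this equals inferences per joule.
    """
    if elapsed_seconds <= 0 or avg_power_watts <= 0:
        raise ValueError("elapsed_seconds and avg_power_watts must be positive")
    throughput = num_inferences / elapsed_seconds
    return throughput / avg_power_watts


def energy_per_inference_mj(num_inferences, elapsed_seconds, avg_power_watts):
    """Average energy spent per inference, in millijoules."""
    if num_inferences <= 0:
        raise ValueError("num_inferences must be positive")
    joules = avg_power_watts * elapsed_seconds
    return joules / num_inferences * 1000.0


# Hypothetical benchmark run: 6000 inferences in 60 s at an average of 5 W.
ipw = inferences_per_watt(6000, 60.0, 5.0)
mj = energy_per_inference_mj(6000, 60.0, 5.0)
print(f"IPW: {ipw:.1f}, energy per inference: {mj:.1f} mJ")
```

Reporting energy per inference alongside IPW makes comparisons between devices with very different throughput easier to read.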

- Short-term: Retrofitting industrial IoT sensors with neuromorphic “ears” and “eyes” to slash monitoring costs.

- Mid-term: Full integration into Level 4 autonomous vehicles, with a projected 15% extension in battery range from localized, low-power neural processing.

Risk Factors: The primary barrier remains the “Programming Gap,” the lack of standardized SNN software frameworks compared with the mature Python-based tooling of legacy AI environments.

Conclusion: The Death of the “Cloud-First” Mandate

The era of sending every byte of data to a central server is ending. Neuromorphic Edge Economics proves that intelligence is most valuable when it is local, lean, and lightning-fast. Those who fail to adapt to the brain-inspired efficiency model will find their margins consumed by their own electricity bills.

Sharp Question:

Is your AI strategy built on the unsustainable power of the past, or the biological efficiency of the future?
