Post-Nvidia Era: Is the AI Chip Bubble Finally Bursting?

The era of mindless Nvidia maximalism is dying. In 2026, if you aren’t looking at the “Efficiency Shift,” you’re betting on a sinking ship.

Executive Summary

- The Dominance Trap: Nvidia’s 70%+ gross margins are becoming “Big Tech’s Tax.” Cloud giants are no longer just buying chips; they are building their own to reclaim profit.

- The ROI Crisis: As AI moves from training to massive-scale inference, the $40,000 general-purpose GPU is being replaced by $5,000 specialized ASICs that run those inference workloads up to 10x more efficiently.

- The 2026 Pivot: The smart money is rotating from “Raw Compute” (Nvidia) to “Intelligence per Watt” (Custom Silicon & Power Infrastructure).

Expectation vs. Reality (2026 Market)

| Factor | Expectation (The Hype) | Reality (2026 Shift) |
| --- | --- | --- |
| Profit | Unlimited GPU Demand | Diminishing Returns on General Training |
| Moat | CUDA is Unbeatable | Open-source (Triton/MLIR) has broken the lock-in |
| Sustainability | Infinite Data Center Growth | Power Grid Constraints are the new hard ceiling |
| Main Driver | LLM Training | Real-time Edge & Sovereign AI Inference |

Market Reality: The “CUDA Moat” is Evaporating

For nearly two decades, Nvidia’s software ecosystem, CUDA, was the gilded cage. In 2026, however, the industry has successfully revolted. The rise of hardware-agnostic compilers like OpenAI’s Triton, along with PyTorch 3.0, has made switching hardware for many workloads almost as simple as changing a line of code.

The fear of “Nvidia-dependency” has forced Google (TPU v7), Amazon (Trainium 3), and Microsoft (Maia 200) to move 60% of their internal workloads to custom silicon. When your biggest customers become your most aggressive competitors, the “Blue Ocean” turns into a bloodbath. Relying on Nvidia as your sole AI proxy is no longer a strategy—it’s a lack of imagination.

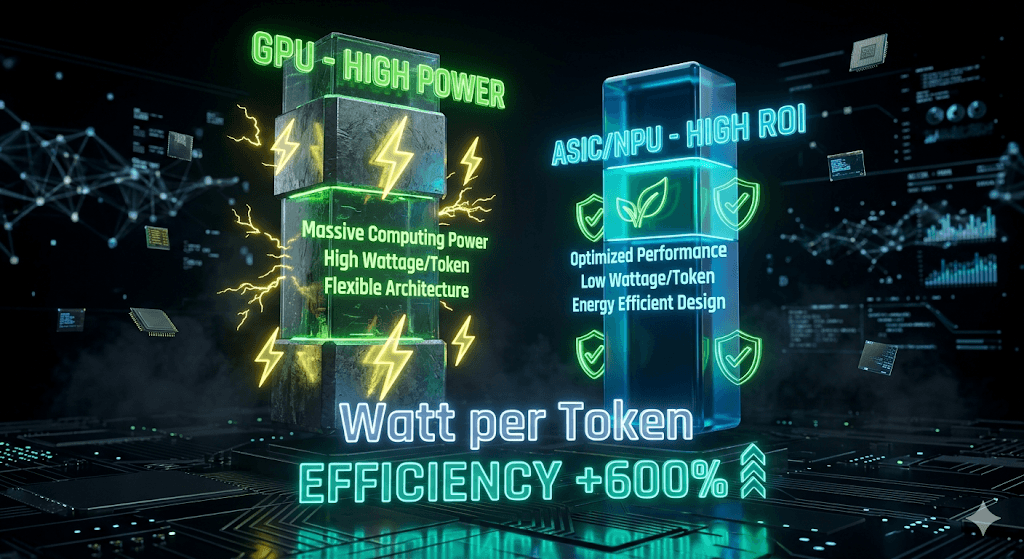

ROI Analysis: Why “Generic” is Losing to “Specific”

General-purpose GPUs are power-hungry monsters. In a world where electricity costs and carbon taxes dictate the bottom line, the ROI on a chip that wastes 60% of its energy on unused features is plummeting.

Capital is flowing toward ASICs (Application-Specific Integrated Circuits). These chips are laser-focused on inference, the stage where AI actually generates revenue.

- Efficiency: ASICs offer up to 65% lower cost-per-token compared to Blackwell-class GPUs.

- Scalability: While Nvidia struggles with supply chains, companies like Broadcom and Marvell are enabling a decentralized explosion of custom AI silicon.
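
To make the cost-per-token comparison concrete, here is a minimal Python sketch of how such a figure might be estimated. Only the two purchase prices ($40,000 and $5,000) come from this article; the power draw, throughput, electricity price, lifetime, and utilization are hypothetical placeholders, and the `Accelerator` class and `cost_per_million_tokens` function are illustrative names, not an industry-standard model.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class Accelerator:
    name: str
    price_usd: float          # purchase price (the two prices quoted in this article)
    power_kw: float           # average draw under load (assumed)
    tokens_per_second: float  # sustained inference throughput (assumed)


def cost_per_million_tokens(chip, usd_per_kwh=0.10, lifetime_years=4.0, utilization=0.6):
    """Amortized hardware cost plus electricity, per one million tokens served."""
    active_hours = HOURS_PER_YEAR * lifetime_years * utilization
    tokens_served = chip.tokens_per_second * 3600 * active_hours
    energy_cost = chip.power_kw * active_hours * usd_per_kwh
    return (chip.price_usd + energy_cost) / tokens_served * 1_000_000


# Hypothetical chips: everything except the prices is an assumption.
gpu = Accelerator("general-purpose GPU", 40_000, 1.00, 10_000)
asic = Accelerator("inference ASIC", 5_000, 0.25, 3_000)

gpu_cost = cost_per_million_tokens(gpu)
asic_cost = cost_per_million_tokens(asic)
saving = 1 - asic_cost / gpu_cost

print(f"{gpu.name}: ${gpu_cost:.4f} per 1M tokens")
print(f"{asic.name}: ${asic_cost:.4f} per 1M tokens")
print(f"ASIC saving: {saving:.0%}")
```

Under these assumed inputs the ASIC comes out cheaper per token even though each chip is slower, because the purchase price dominates the total. The size of the gap swings widely with the throughput and utilization you plug in, so treat any single percentage with caution.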

“The bottleneck is no longer compute power; it is the cost of the electron. Whoever delivers the most intelligence per watt wins the 2026 hardware war.” — by TMA
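
One way to read “intelligence per watt” is as tokens per joule, which sets how much inference fits under a fixed power budget. The sketch below assumes a 1 MW site and a power usage effectiveness (PUE) of 1.25; the chip wattages and throughputs are hypothetical, and `tokens_per_joule` and `site_throughput` are illustrative helper names.

```python
def tokens_per_joule(tokens_per_second, power_watts):
    """Tokens produced per joule of chip energy (watts are joules per second)."""
    return tokens_per_second / power_watts


def site_throughput(site_watts, chip_watts, chip_tokens_per_second, pue=1.25):
    """Total tokens per second a power-capped site can serve.

    PUE is total facility power divided by IT power, so only
    site_watts / pue is available to the chips themselves.
    """
    it_watts = site_watts / pue
    chips = int(it_watts // chip_watts)  # whole chips only
    return chips * chip_tokens_per_second


SITE_WATTS = 1_000_000  # assumed 1 MW grid allocation

# Hypothetical chips: (watts, tokens per second)
gpu_site = site_throughput(SITE_WATTS, 1_000, 10_000)
asic_site = site_throughput(SITE_WATTS, 250, 3_000)

print(f"GPU:  {tokens_per_joule(10_000, 1_000):.1f} tokens/J, site total {gpu_site:,} tokens/s")
print(f"ASIC: {tokens_per_joule(3_000, 250):.1f} tokens/J, site total {asic_site:,} tokens/s")
```

When the grid allocation is the binding constraint, the chip with more tokens per joule serves more traffic from the same site, regardless of which chip is faster individually.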

2026 Strategy & Risk: Is It Too Late to Pivot?

The “Bubble” isn’t AI itself—it’s the overvaluation of raw, inefficient compute.

- The Risk: If you are holding legacy GPU stocks while the world moves to AI PCs and NPU-integrated devices, you are holding the “Intel of the AI era.”

- The Strategy: Diversify into the energy infrastructure (nuclear, including small modular reactors) that powers these chips, and into the specialized NPU designers who are cannibalizing Nvidia’s mid-range market.

Conclusion: The Cannibalization Has Begun

Stop waiting for a “crash”—watch the transition. The capital is leaking out of centralized, expensive data centers and flowing into specialized, efficient edge hardware. Nvidia isn’t going to zero, but its days of 1,000% growth are over.

The smart money has already moved to the “Nvidia Killers” lurking in the ASIC and NPU sectors. Move your capital, or watch it evaporate in the heat of an obsolete GPU cluster.

Sharp Question:

Is your portfolio built on the technology of 2023, or the efficiency requirements of 2027?

Tags: Nvidia Bubble, AI Chips, NPU vs GPU, ASIC Investment, Semiconductor Trends 2026, Tech Macro Archive