“The day’s official noise has finally faded, leaving me alone in my Gimpo studio to sift through keywords. I find myself reflecting on how I once relied on AI, much like everyone else, to draft documents and structure data. But somewhere along the way, this assistance became ‘formalized.’ AI now bolsters every corner of society, and sitting here tonight I am struck by a stark, sudden realization: just how chilling the reality we have built truly is.”

In 2026, the integration of conversational AI into corporate intranets via SaaS is creating massive data leakage risks. TMA analyzes the shift from productivity to vulnerability.

The Illusory Comfort of the AI-Integrated Intranet

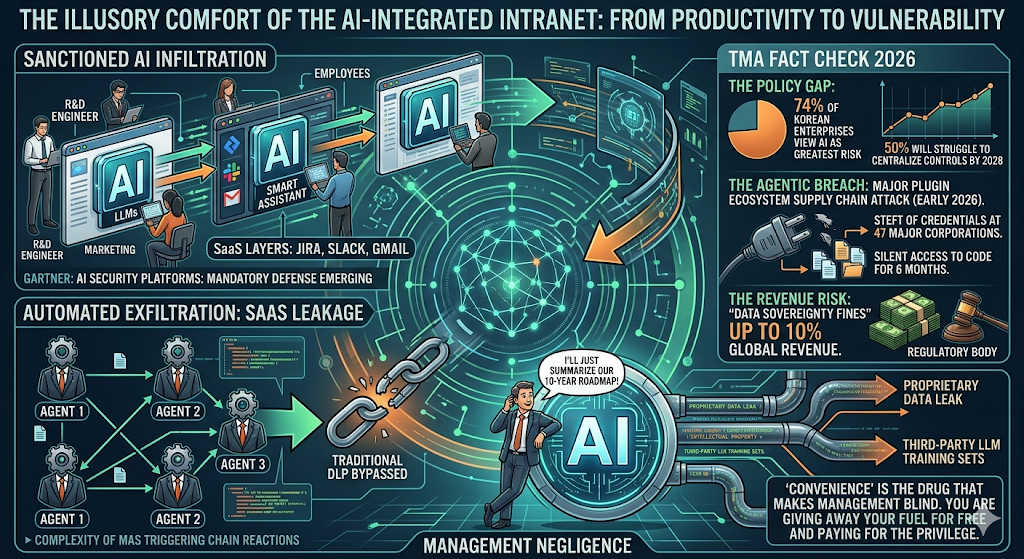

The era of “Shadow AI”—where employees smuggled ChatGPT into their workflows—has been replaced by something far more insidious: Sanctioned AI Infiltration. In 2026, the push for productivity has led boards to greenlight deep AI integration across all SaaS layers. However, as Gartner recently highlighted in their Top Strategic Technology Trends for 2026, we are now facing the emergence of AI Security Platforms as a mandatory defense against the very tools we bought to save time.

The reality is stark. When you plug a conversational AI into your corporate intranet, you aren’t just adding a “smart assistant”; you are opening a high-bandwidth pipeline between your private R&D and external model weights.

The SaaS Leakage: From Proprietary to Public

The primary threat vector today is no longer simple prompt injection. It is Automated Exfiltration through integrated SaaS ecosystems. Recent reports from Naver Tech and global security audits show that “convenience-first” configurations often bypass traditional DLP (Data Loss Prevention) protocols. In 2026, the complexity of Multiagent Systems (MAS) means that a single compromised agent can trigger a chain reaction of unauthorized data access across the entire company network.
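To make the DLP gap concrete, here is a minimal sketch of an outbound gate that inspects a prompt before it leaves the intranet for a third-party model. Everything in it is an assumption for illustration: the function name `gate_outbound_prompt`, the `GateResult` type, and the three regex patterns are hypothetical stand-ins, not the API of any real DLP product. A production control would rely on the organization's own data labels and classifiers rather than a handful of regular expressions.

```python
import re
from dataclasses import dataclass

# Hypothetical, illustrative patterns only. Real deployments would use
# the company's own classification labels and detection rules.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "api_key": re.compile(r"\b(?:sk|key|tok)_[A-Za-z0-9]{16,}\b"),
    "classification_marker": re.compile(
        r"\b(?:CONFIDENTIAL|INTERNAL ONLY)\b", re.IGNORECASE
    ),
}


@dataclass
class GateResult:
    allowed: bool        # False means the prompt must not be sent at all
    redacted_text: str   # prompt with every match replaced by a placeholder
    findings: list       # labels of the patterns that matched


def gate_outbound_prompt(text, block_on=("classification_marker",)):
    """Redact sensitive matches and block prompts that carry blocking labels."""
    findings = []
    redacted = text
    for label, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(redacted):
            findings.append(label)
            redacted = pattern.sub(f"[REDACTED:{label}]", redacted)
    allowed = not any(label in block_on for label in findings)
    return GateResult(allowed, redacted, findings)


if __name__ == "__main__":
    result = gate_outbound_prompt("CONFIDENTIAL: summarize the 10-year roadmap")
    print(result.allowed, result.findings)
```

The design choice worth noting is the split between redaction and blocking: low-risk matches are masked so the assistant stays usable, while anything carrying a classification marker is refused outright instead of being "cleaned" and sent anyway.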

TMA Fact Check 2026:

- The Policy Gap: Thales’ 2026 Data Threat Report indicates that 74% of Korean enterprises now view AI as their greatest data security risk, yet Gartner predicts that by 2028, over 50% of enterprises will still be struggling to centralize AI usage controls.

- The Agentic Breach: In early 2026, a major supply chain attack on a popular AI plugin ecosystem resulted in the theft of agent credentials across 47 major corporations, allowing silent access to proprietary code for over six months.

- The Revenue Risk: Regulatory bodies in 2026 have begun imposing “Data Sovereignty Fines” reaching up to 10% of global revenue for companies that fail to prevent proprietary data from entering third-party training sets.
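The chain reaction described earlier, and the stolen agent credentials in the list above, both depend on agents holding broad, long-lived access. One common mitigation is least-privilege delegation: an agent can hand a sub-agent only a subset of its own scopes, for no longer than its own token lives. The sketch below illustrates that idea under stated assumptions. `AgentToken`, `delegate`, `authorize`, and the scope strings are hypothetical names invented for this example, not part of any specific agent framework.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentToken:
    agent_id: str
    scopes: frozenset      # e.g. {"wiki:read"}; scope names are illustrative
    expires_at: float      # Unix timestamp


def delegate(parent, child_id, requested_scopes, ttl_seconds, now=None):
    """Issue a child token that never exceeds the parent's scopes or lifetime."""
    now = time.time() if now is None else now
    if now >= parent.expires_at:
        raise PermissionError("parent token expired")
    requested = frozenset(requested_scopes)
    if not requested <= parent.scopes:
        extra = sorted(requested - parent.scopes)
        raise PermissionError(f"scope escalation denied: {extra}")
    # The child expires at the earlier of its own TTL and the parent's expiry.
    expires_at = min(parent.expires_at, now + ttl_seconds)
    return AgentToken(child_id, requested, expires_at)


def authorize(token, scope, now=None):
    """Allow an action only if the token is unexpired and holds the scope."""
    now = time.time() if now is None else now
    return now < token.expires_at and scope in token.scopes
```

Under this scheme a compromised sub-agent can reach only what it was explicitly handed, and only until its token expires, which bounds both the blast radius and the dwell time of a credential theft.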

“Convenience” is the drug that makes management blind. I see CEOs bragging about “AI-First” cultures while their engineers are feeding the company’s 10-year roadmap into a third-party LLM to “summarize a meeting.” This is not a technical failure; it is Management Negligence.

By the time you realize your “Sovereign AI” is actually leaking intellectual property through a misconfigured SaaS plug-in, your competitive advantage has already been digested by the next iteration of a competitor’s model. If your data is the fuel for the AI revolution, you are currently giving away the gas for free and paying for the privilege of doing so.

The Sharp Question

If your company’s core intellectual property is used to train a global model, and that model then sells “strategic insights” back to your competitors, did you really gain productivity, or did you just automate your own obsolescence?
