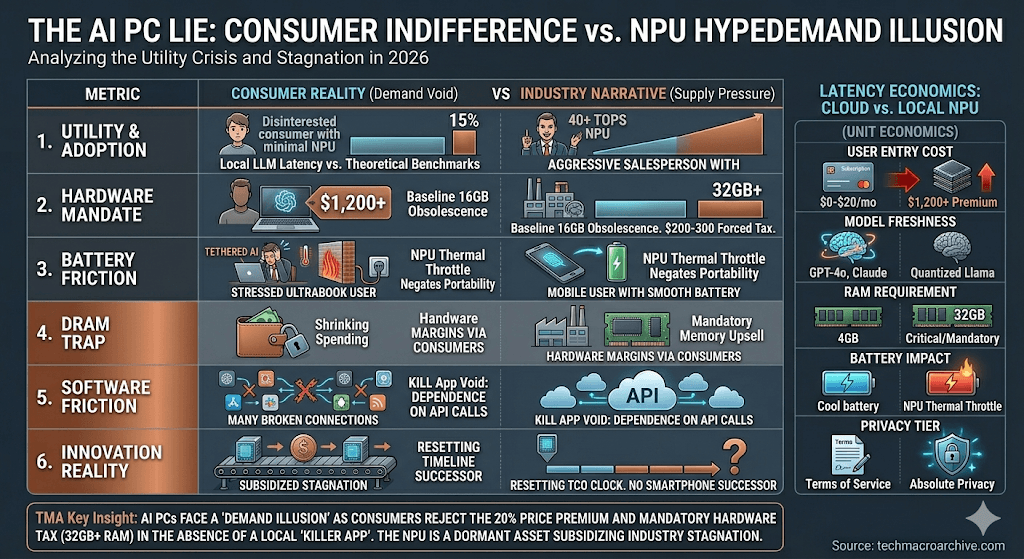

AI PCs promise a productivity revolution, but the market faces a “Demand Illusion.” Explore the cold reality of the NPU killer app void, the 16GB DRAM trap, and the subsidy of stagnation.

The semiconductor industry is facing a significant AI PC Demand Illusion: OEM hype is colliding with chilling indifference at retail. While OEMs tout NPU (Neural Processing Unit) benchmarks, the actual utility of 40 TOPS remains a theoretical ghost. Software developers struggle to find a “Killer App” that justifies the 20% price premium on next-gen silicon, leaving consumers wondering if they are buying a tool or an expensive experiment.

[Executive Summary — Cold Truths]

- Utility Crisis: The AI PC remains a luxury novelty until local LLM latency provides a measurable ROI over cloud-based inference for the average enterprise user.

- The DRAM Trap: Hardware margins are under threat as AI requirements force a baseline of 32GB RAM, shifting the cost burden to the consumer without a corresponding leap in UX.

- Battery Friction: High-performance NPU tasks create a thermal wall that negates the portability benefits of modern ultrabooks, leading to a “Tethered AI” reality.

The 16GB Obsolescence: The Hidden Hardware Tax

The industry is quietly redefining the “Standard PC.” By mandating high TOPS (Trillions of Operations Per Second) for Windows “Copilot+” features, Microsoft and OEMs have effectively turned 16GB RAM machines into legacy hardware overnight.

This isn’t just a spec bump; it’s a forced obsolescence cycle. For the consumer, the “AI PC” isn’t a gift of intelligence, but a mandatory $200-300 tax to maintain basic OS fluidity in a post-LLM environment. Manufacturers are not selling capabilities; they are selling a ticket to stay relevant in an increasingly bloated software ecosystem.
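A rough way to see why RAM becomes the pressure point is to estimate how much memory a local model's weights occupy. The sketch below is a back-of-the-envelope calculation, not a benchmark. The parameter counts and bit widths are illustrative assumptions rather than figures from this article, and the estimate covers weights only, ignoring the OS, the browser, and the model's runtime cache.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate RAM needed for model weights alone, in decimal gigabytes."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9

# Hypothetical model sizes and precisions, chosen only for illustration.
scenarios = [(8, 16), (8, 4), (70, 4)]

for params, bits in scenarios:
    gb = weight_memory_gb(params, bits)
    print(f"{params}B parameters at {bits}-bit: ~{gb:.1f} GB of weights")
```

Under these assumptions, an 8-billion-parameter model at 16-bit precision would fill a 16GB machine on its own, which is why local models typically ship quantized.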

AI PC Demand Illusion: Latency Economics and Costs

The market narrative assumes users crave privacy and speed, yet Latency Economics suggests otherwise. Under current conditions, the cost of specialized NPU hardware outweighs the marginal speed gains of local processing for most mundane tasks.

[Unit Economics: Cloud vs. Local NPU]

| Metric | Cloud-Based AI (SaaS) | Local AI PC (NPU) |
|---|---|---|
| User Entry Cost | $0 – $20/mo (Subscription) | $1,200+ (Hardware Premium) |
| Model Freshness | Real-time (GPT-4o, Claude 3.5) | Static / Quantized (Local Llama) |
| RAM Requirement | Browser-level (4GB enough) | Critical (32GB+ Mandatory) |
| Battery Impact | Negligible (Server-side) | High (NPU Thermal Throttle) |
| Privacy Tier | Terms of Service dependent | Absolute (The Only Real Value) |
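The entry-cost row of the table can be turned into a simple break-even estimate. The sketch below uses only the table's two figures, the $1,200 hardware floor and the $20/mo subscription ceiling. It assumes the full hardware price is attributed to AI use and ignores electricity, resale value, and subscription price changes, so treat it as a rough illustration rather than a full TCO model.

```python
import math

def breakeven_months(hardware_cost: float, monthly_subscription: float) -> float:
    """Months of subscription fees needed to equal the up-front hardware cost."""
    if monthly_subscription <= 0:
        # A free cloud tier never accumulates enough fees to match a purchase.
        return math.inf
    return hardware_cost / monthly_subscription

HARDWARE_COST = 1200.0  # lower bound of "$1,200+" from the table
SUBSCRIPTION = 20.0     # upper bound of "$0 - $20/mo" from the table

months = breakeven_months(HARDWARE_COST, SUBSCRIPTION)
print(f"Break-even after {months:.0f} months ({months / 12:.1f} years)")
# prints "Break-even after 60 months (5.0 years)"
```

Even against the most expensive subscription tier in the table, the local machine takes five years to pay for itself on cost alone. On the free tier it never does.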

This Demand Illusion is fueled by supply-side pressure. Manufacturers are pushing AI PCs not because the technology is ready, but because the traditional laptop replacement cycle has hit a stagnation point. The “AI Ready” sticker is a desperate attempt to reset the TCO (Total Cost of Ownership) clock and justify higher ASPs (Average Selling Prices) in a saturated market.

The Killer App Void and Silicon Stagnation

Until we see a fundamental shift in OS-level integration, the NPU is a dormant asset.

- Software Friction: Most AI tools still rely on API calls to hyperscalers, rendering local hardware redundant for those with stable internet.

- Memory Bottlenecks: The DRAM Supercycle is a misnomer; it’s a mandatory tax on hardware that shrinks consumer discretionary spending on other tech sectors.

[The Evidence — Upgraded]

- Gartner Market Analysis — AI PC shipments are projected to grow, but consumer sentiment surveys show only 15% are willing to pay a premium for NPU features.

- IDC Device Tracker — Average Selling Price (ASP) for laptops is rising faster than inflation, threatening total sales volume growth in 2026.

[The Sharp Question]

If the most sophisticated “AI” task the NPU in your $1,500 AI PC performs is blurring a webcam background during a legacy Zoom call, have you purchased a ticket to the future, or have you simply subsidized the semiconductor industry’s struggle to find a successor to the smartphone?

Demand Illusion, DRAM Supercycle, Hardware Replacement Cycle, Average Selling Price (ASP), Consumer Discretionary Spending, TCO for Enterprise, Inventory Overhang, Latency Economics, Killer App Void, Local Inference Cost, DRAM Expansion Tax, NPU vs GPU Efficiency, Edge Compute ROI, Thermal Throttling