The biting March chill from the Han River still clings to my Gimpo studio this morning, a stark reminder that even in the age of “Blackwell,” physical cooling remains the final arbiter of power. I’m staring at the HBM3E supply charts on my monitor, the blue light reflecting off my cold espresso. It’s clear: NVIDIA’s Blackwell architecture is no longer just a chip; it’s a gravitational well swallowing the entire memory industry.

The HBM3E Golden Standard: Sold Out Through 2026

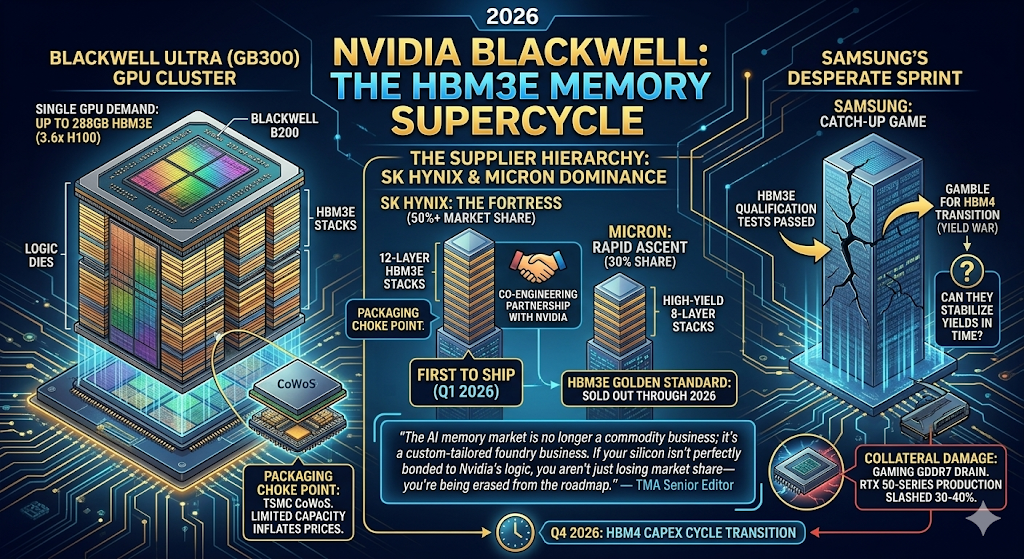

In 2026, the semiconductor industry has entered what I call the “Memory Supercycle.” The Blackwell B200 and the even more gluttonous Blackwell Ultra (GB300) have turned HBM3E into the world’s most precious commodity. As of Q1 2026, major suppliers like SK Hynix and Micron have already sold out their entire HBM production capacity through the end of the year.

The sheer scale is staggering. A single Blackwell Ultra GPU now demands up to 288GB of HBM3E, 3.6x the 80GB carried by the H100. This isn’t just a trend; it’s a structural transformation. Memory is no longer a peripheral; it is the critical bottleneck of the AI Factory. As noted in The AI Capex Threshold, if you can’t secure the memory, your billion-dollar GPU clusters are nothing more than expensive heaters.
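
To make the 3.6x figure concrete, here is a minimal sketch of the arithmetic in Python. The 288GB figure comes from the paragraph above; the 80GB H100 capacity and the 1,000-GPU cluster size are my own illustrative assumptions, and the helper names are hypothetical.

```python
# Back-of-the-envelope HBM arithmetic (illustrative only).
BLACKWELL_ULTRA_HBM_GB = 288  # per-GPU figure quoted in the article
H100_HBM_GB = 80              # assumption: H100 capacity, not stated in the article


def capacity_ratio(new_gb: float, old_gb: float) -> float:
    """Return how many times larger the new per-GPU capacity is."""
    return new_gb / old_gb


def cluster_hbm_tb(gpu_count: int, gb_per_gpu: float) -> float:
    """Return total HBM in a cluster, in decimal terabytes."""
    return gpu_count * gb_per_gpu / 1000


if __name__ == "__main__":
    ratio = capacity_ratio(BLACKWELL_ULTRA_HBM_GB, H100_HBM_GB)
    print(f"Per-GPU capacity ratio: {ratio:.1f}x")

    # Hypothetical 1,000-GPU cluster, to show how quickly demand compounds.
    for name, gb in [("H100", H100_HBM_GB), ("Blackwell Ultra", BLACKWELL_ULTRA_HBM_GB)]:
        print(f"{name}: {cluster_hbm_tb(1000, gb):.0f} TB of HBM per 1,000 GPUs")
```

The point of the cluster line is that the same GPU count now pulls several times as much memory out of a supply that is already sold out.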

The Supplier Hierarchy: SK Hynix’s Fortress and Samsung’s Sprint

The hierarchy of 2026 is brutally clear. SK Hynix maintains its “Fortress” status, holding over 50% of the HBM market thanks to its long-standing co-engineering partnership with NVIDIA. They are the first to ship the 12-layer HBM3E stacks that Blackwell Ultra craves.

Samsung, as I detailed in The HBM4 Yield War, is currently in a desperate “All-In” gamble. While they have finally passed qualification tests for HBM3E, they are still playing catch-up. Their success in late 2026 hinges on whether they can stabilize yields for the upcoming HBM4 transition faster than the competition. The market has no mercy for “retesting” in the middle of a supercycle.

“The AI memory market is no longer a commodity business; it’s a custom-tailored foundry business. If your silicon isn’t perfectly bonded to Nvidia’s logic, you aren’t just losing market share—you’re being erased from the roadmap.” — TMA Senior Editor

TMA Fact Check 2026: The Bottleneck Realities

- The Packaging Choke Point: The limit isn’t just making the HBM; it’s the CoWoS (Chip-on-Wafer-on-Substrate) packaging. TSMC remains the only game in town for the massive 2.5D/3D integration Blackwell requires, creating a secondary bottleneck that keeps prices inflated.

- Gaming’s Collateral Damage: The HBM mania has drained the GDDR7 supply chain. As a result, NVIDIA has reportedly slashed RTX 50-series production by 30-40% in early 2026 to prioritize AI wafers.

- The HBM4 Transitional Shadow: Even as HBM3E reigns as the 2026 “Golden Standard,” the industry is already obsessed with HBM4. The transition is expected to trigger another massive Capex cycle by Q4 2026.

Related Deep Analysis

- The HBM4 Yield War: SK Hynix’s Lead vs. Samsung’s Desperate ‘All-In’ Gamble

- The AI Capex Threshold: The Cold Judgment of ROI in 2026

- The Backdoor Revolution: BSPDN and the Great Recalibration of the 2nm Foundry War

The Sharp Question

Are you still looking at “Memory” as a cyclical commodity, or do you recognize HBM3E as the high-margin “Energy” that powers the entire AI economy? In 2026, the spreadsheet that doesn’t account for HBM scarcity isn’t an analysis—it’s a work of fiction.
