From my vantage point in Gimpo, looking out at the murky fog of the Han River, the metaphor for today’s information landscape is clear. We are drowning in a sea of synthetic reality. But as of January 2026, the fog is being forcibly cleared by the iron hand of regulation. The era where Big Tech could release “god-like” generative models without accountability has officially ended. Today, if an AI speaks, it must show its ID.

As Korea’s AI Basic Act and California’s SB 942 take effect in 2026, AI watermarking has shifted from a voluntary pledge to a legal mandate for Big Tech.

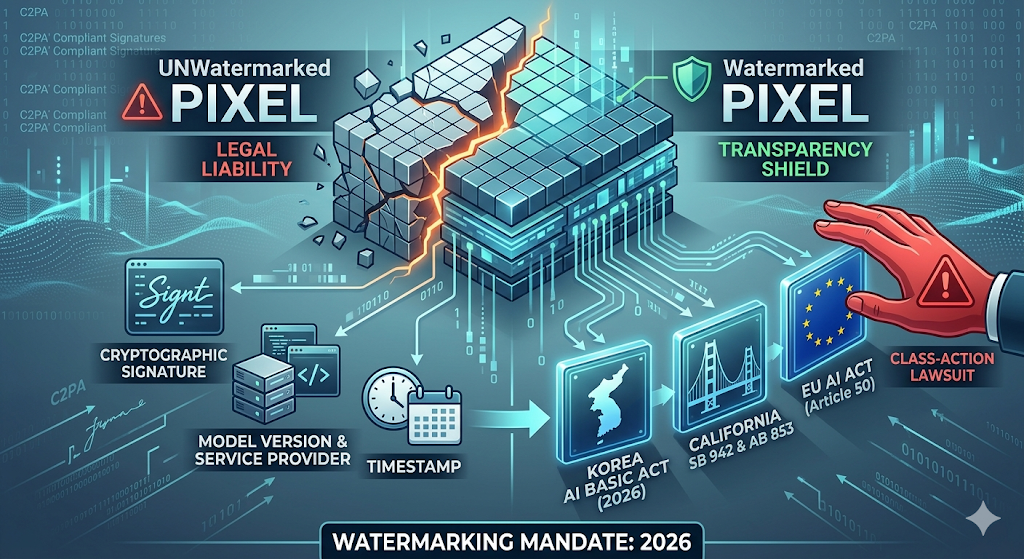

The Mandate: Watermarks as a Legal Necessity

In 2026, AI watermarking is no longer a PR-friendly “safety feature.” It is a structural requirement of Korea’s AI Basic Act and California’s SB 942 (the AI Transparency Act). SB 942, for instance, applies to providers whose generative AI systems have more than 1 million monthly users, and requires them to embed permanent, machine-readable “latent disclosures” in the image, video, and audio content those systems generate.

This isn’t just about a visible “Made by AI” sticker. We are talking about deep-level metadata—cryptographically signed provenance that tracks the model version, the service provider, and the timestamp.
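To make “cryptographically signed provenance” concrete, here is a minimal Python sketch of a disclosure record that carries the provider, model version, and timestamp, and is bound to the content by a hash. It is an illustration under stated assumptions, not any provider’s actual format: the field names, `build_disclosure`, `verify_disclosure`, and `DEMO_KEY` are all hypothetical, and the HMAC with a shared secret stands in for the certificate-based signatures that standards such as C2PA rely on.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Hypothetical demo key. A real provider would sign with a private key
# (certificate-based signatures), not a shared secret like this one.
DEMO_KEY = b"demo-signing-key"


def build_disclosure(content: bytes, provider: str, model: str, version: str) -> dict:
    """Build a provenance record bound to the content by its SHA-256 hash."""
    record = {
        "provider": provider,
        "model": model,
        "model_version": version,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    # Sign a canonical (sorted-key) JSON encoding of the unsigned record.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify_disclosure(content: bytes, record: dict) -> bool:
    """Check the signature and that the record describes this exact content."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, record.get("signature", ""))
    content_ok = unsigned.get("content_sha256") == hashlib.sha256(content).hexdigest()
    return signature_ok and content_ok
```

Changing either the file or any field in the record (say, swapping the model version) makes `verify_disclosure` return `False`, which is the property that separates signed provenance from an ordinary metadata tag.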

“In 2026, a pixel without a watermark is a legal liability. For Big Tech, transparency is the only shield against billion-dollar class-action lawsuits.” — TMA Senior Editor

TMA Fact Check: The 2026 Regulatory Teeth

- The AI Basic Act (Korea): Effective January 22, 2026, this law imposes strict labeling requirements for any AI output “difficult to distinguish from reality.” Failure to comply can lead to massive administrative fines and, more importantly, a direct hit to corporate ESG ratings.

- California’s SB 942 & AB 853: These twin pillars of transparency require large providers to offer free, public “detection tools.” By August 2026, the liability shifts: if a platform fails to detect and label AI content, it is as legally responsible for the misinformation as the person who prompted it.

- EU AI Act (Article 50): The August 2026 deadline for transparency obligations is the final nail in the coffin for anonymous synthetic media. The EU’s “Code of Practice” now demands multi-layered marking strategies, combining watermarks with C2PA-compliant digital signatures.
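Why layer a watermark on top of signed metadata? Metadata can be stripped when a file is re-saved or screenshotted, while a mark carried in the pixels themselves travels with the image. The toy Python sketch below illustrates the idea only: `TAG_BITS`, `embed_tag`, `has_tag`, and `classify` are hypothetical names, and the least-significant-bit trick used here is deliberately simplistic. It would not survive compression or resizing, so it should not be read as how any production watermark works.

```python
# Hypothetical 16-bit tag; real schemes use keyed, far more robust patterns.
TAG_BITS = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 1, 0, 0, 1, 0, 1]


def embed_tag(pixels: bytes) -> bytes:
    """Write TAG_BITS into the least significant bit of the first pixel values."""
    if len(pixels) < len(TAG_BITS):
        raise ValueError("image too small to carry the tag")
    out = bytearray(pixels)
    for i, bit in enumerate(TAG_BITS):
        out[i] = (out[i] & 0xFE) | bit  # each value changes by at most 1
    return bytes(out)


def has_tag(pixels: bytes) -> bool:
    """Return True if the first pixel values carry TAG_BITS in their low bits."""
    if len(pixels) < len(TAG_BITS):
        return False
    return all((pixels[i] & 1) == bit for i, bit in enumerate(TAG_BITS))


def classify(pixels: bytes, has_signed_manifest: bool) -> str:
    """Combine the two layers: signed metadata first, watermark as fallback."""
    if has_signed_manifest:
        return "ai-generated (signed manifest)"
    if has_tag(pixels):
        return "ai-generated (watermark only, metadata stripped)"
    return "no AI marking found"
```

A detection tool built this way still labels an image whose manifest was removed, as long as the in-pixel layer survives.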

From Platform Immunity to Content Liability

For decades, Big Tech enjoyed the protection of “Safe Harbor” and Section 230-style immunity. In 2026, that privilege is being revoked for the AI era. When a deepfake causes financial panic or political unrest, the “Inference Provider” (the Big Tech firm whose API was used) is now being scrutinized for “Negligent Distribution.”

The focus has shifted from Input Risk (copyright) to Output Liability (harm). If your model didn’t secure its output with a robust, tamper-resistant watermark, you are now an accomplice in the eyes of the court.

The Sharp Question

As we build a global ‘Digital Identification’ system for every AI pixel, are we successfully fighting misinformation, or are we simply creating a massive surveillance apparatus for the provenance of every human thought?
