As the $720B AI bet hits the power wall, ‘Energy Arbitrage’ becomes the only metric for ROI. Discover why raw compute is dead without cheap electrons.

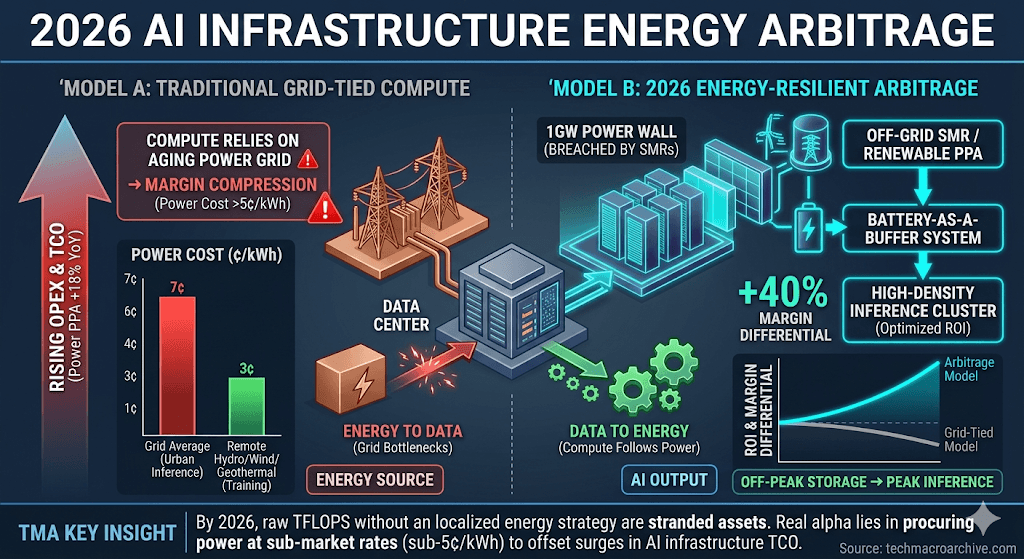

The $720 billion hardware gamble is colliding with the physical limits of a strained global power grid, and raw TFLOPS count for little without a localized energy strategy. While the market remains fixated on deliveries of Blackwell B200 units, the true alpha is shifting from silicon ownership to AI Infrastructure Energy Arbitrage: the ability to procure and store power at below-market rates to offset the surging total cost of ownership (TCO) of next-generation data centers.

[Executive Summary — Cold Truths]

- Conditional Viability: The AI rally is sustainable only if operators can secure power contracts below 5 cents/kWh; anything higher triggers immediate margin compression.

- The Arbitrage Shift: Value is migrating from “Compute-rich” firms to “Energy-resilient” infrastructure players who can exploit grid latency, the lag between surging power demand and new grid capacity coming online.

- The Capex Trap: Massive investments in HBM4 and advanced logic will become stranded assets unless localized small modular reactors (SMRs) breach the 1GW Power Wall.
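To see why a single cent per kWh matters at data center scale, here is a back-of-the-envelope sketch of a facility's annual electricity bill. It is a minimal illustration under stated assumptions: the function name `annual_energy_cost`, the 100 MW IT load, and the PUE of 1.2 are hypothetical inputs chosen for the arithmetic, not figures reported by any operator.

```python
# Hypothetical back-of-the-envelope model; all inputs are illustrative assumptions.
HOURS_PER_YEAR = 8760


def annual_energy_cost(it_load_mw: float, pue: float, price_per_kwh: float,
                       load_factor: float = 1.0) -> float:
    """Return the annual electricity bill in USD for a data center.

    it_load_mw:    average IT (compute) load in megawatts.
    pue:           power usage effectiveness, total facility power / IT power (>= 1.0).
    price_per_kwh: contracted price in USD per kWh (0.05 means 5 cents).
    load_factor:   fraction of the year the IT load actually runs, in (0, 1].
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    if not 0.0 < load_factor <= 1.0:
        raise ValueError("load_factor must be in (0, 1]")
    facility_kw = it_load_mw * 1000.0 * pue  # IT load plus cooling and other overhead
    kwh_per_year = facility_kw * HOURS_PER_YEAR * load_factor
    return kwh_per_year * price_per_kwh


if __name__ == "__main__":
    # Hypothetical 100 MW IT load at PUE 1.2, priced at several contract rates.
    for cents in (4, 5, 6, 8):
        bill = annual_energy_cost(it_load_mw=100, pue=1.2, price_per_kwh=cents / 100)
        print(f"{cents} cents/kWh -> ${bill / 1e6:,.1f}M per year")
```

Under these assumed inputs the facility consumes about 1.05 billion kWh a year, so each additional cent per kWh adds roughly $10.5 million to the annual bill.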

The Arbitrage Logic — Silicon vs. Electrons

The current market friction lies in the decoupling of compute supply from energy availability. High-performance compute is available, but its fuel (stable, high-density electricity) is geographically trapped or priced beyond profitability. This is where AI Infrastructure Energy Arbitrage becomes the defining metric of fiscal year 2026.

True Energy Arbitrage in 2026 means moving the data to the energy, not the energy to the data. This “Compute-follows-Power” model is the only hedge against the Capex Trap, in which billion-dollar clusters sit idle because of grid curtailment. As hyperscalers scramble for North Dakota’s wind power and Iceland’s geothermal power, the arbitrage gap between “Grid-tied” and “Off-grid” compute is widening to a 40% margin differential.

“In 2026, a GPU without a dedicated power PPA is not an asset; it is a liability with a 3-year depreciation clock. Without AI Infrastructure Energy Arbitrage, your ROI is a statistical illusion.”
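The Capex Trap can be made concrete with a per-hour cost model: depreciation accrues every hour whether or not the grid lets the cluster run, while electricity is paid only for the hours that actually run. This sketch assumes straight-line depreciation over the three-year clock in the quote above; the function `cost_per_useful_gpu_hour` and every input value ($40,000 per GPU, 1.2 kW draw, PUE 1.2) are hypothetical illustrations.

```python
# Hypothetical per-hour cost model for the "Capex Trap"; inputs are illustrative.
HOURS_PER_YEAR = 8760


def cost_per_useful_gpu_hour(capex_per_gpu: float, depreciation_years: float,
                             availability: float, gpu_kw: float, pue: float,
                             price_per_kwh: float) -> float:
    """Return USD per GPU-hour of work actually delivered.

    capex_per_gpu:      all-in hardware cost attributed to one GPU, USD.
    depreciation_years: straight-line depreciation period.
    availability:       fraction of hours the GPU can run after grid curtailment, in (0, 1].
    gpu_kw, pue:        average GPU draw (kW) and facility overhead multiplier.
    price_per_kwh:      electricity price, USD/kWh; paid only for hours that run.
    """
    if not 0.0 < availability <= 1.0:
        raise ValueError("availability must be in (0, 1]")
    # Depreciation accrues every hour, idle or not, so curtailed hours
    # spread the same capex over fewer useful hours.
    capex_per_hour = capex_per_gpu / (depreciation_years * HOURS_PER_YEAR)
    capex_per_useful_hour = capex_per_hour / availability
    energy_per_useful_hour = gpu_kw * pue * price_per_kwh
    return capex_per_useful_hour + energy_per_useful_hour


if __name__ == "__main__":
    common = dict(capex_per_gpu=40_000, depreciation_years=3, gpu_kw=1.2, pue=1.2)
    scenarios = {
        "baseline (95% up, 5 c/kWh)": (0.95, 0.05),
        "pricier power (95% up, 10 c/kWh)": (0.95, 0.10),
        "curtailed grid (70% up, 5 c/kWh)": (0.70, 0.05),
    }
    for label, (avail, price) in scenarios.items():
        cost = cost_per_useful_gpu_hour(availability=avail, price_per_kwh=price, **common)
        print(f"{label}: ${cost:.2f} per useful GPU-hour")
```

With these assumed inputs, dropping availability from 95% to 70% raises the cost per useful GPU-hour far more than doubling the power price from 5 to 10 cents/kWh, which is the mechanism by which an idle cluster turns into a liability.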

Latency Economics and the Grid Constraint

The friction intensifies once Latency Economics enters the picture. Training runs are batch jobs that tolerate network delay, so training clusters can sit beside remote hydroelectric dams; inference answers users in real time and must sit close to urban grids, the very places where power prices peak. This creates a structural bottleneck that most “Sovereign AI” initiatives are physically unprepared to handle.
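The training/inference split can be expressed as a simple placement rule: latency-tolerant training goes to the cheapest power available, while inference takes the cheapest site that still meets a latency budget. This is a hypothetical sketch; the `Site` type, the site names, prices, round-trip times, and the 50 ms default budget are illustrative assumptions, and real schedulers also weigh capacity, data residency, and interconnect.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Site:
    """A candidate data center location (hypothetical fields)."""
    name: str
    price_per_kwh: float  # contracted power price, USD/kWh
    rtt_ms: float         # round-trip latency to the main user population, ms


def place(workload: str, sites: List[Site],
          latency_budget_ms: float = 50.0) -> Optional[Site]:
    """Pick the cheapest-power site that satisfies the workload's latency needs.

    "training" is treated as latency-insensitive (batch work).
    "inference" must stay within latency_budget_ms of users.
    Returns None when no site qualifies.
    """
    if workload == "training":
        candidates = sites
    elif workload == "inference":
        candidates = [s for s in sites if s.rtt_ms <= latency_budget_ms]
    else:
        raise ValueError(f"unknown workload type: {workload!r}")
    return min(candidates, key=lambda s: s.price_per_kwh, default=None)


if __name__ == "__main__":
    # Illustrative sites; names, prices, and latencies are assumptions.
    sites = [
        Site("remote-hydro", price_per_kwh=0.03, rtt_ms=120.0),
        Site("suburban-campus", price_per_kwh=0.08, rtt_ms=25.0),
        Site("metro-core", price_per_kwh=0.11, rtt_ms=5.0),
    ]
    print(place("training", sites).name)                         # remote-hydro
    print(place("inference", sites).name)                        # suburban-campus
    print(place("inference", sites, latency_budget_ms=10).name)  # metro-core
```

Tightening the latency budget pushes inference toward the expensive metro site, which is the structural bottleneck described above in miniature.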

To master AI Infrastructure Energy Arbitrage, firms are now deploying “Battery-as-a-Buffer” systems. These systems charge on-site batteries from the grid during off-peak hours and discharge them to run high-density inference during peak demand, decoupling the cost of intelligence from the real-time volatility of grid prices.
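A Battery-as-a-Buffer policy can be sketched as a threshold rule over hourly prices: charge when power is cheap, discharge into the data center load when it is expensive. This is a minimal sketch, not an optimal dispatch (production systems typically optimize against forecast prices); the function name `grid_cost`, the 90% round-trip efficiency, the 4 MWh battery, and the two-tier price curve are assumptions for illustration.

```python
from typing import Sequence


def grid_cost(prices: Sequence[float], load_kw: float, capacity_kwh: float = 0.0,
              power_kw: float = 0.0, efficiency: float = 0.9,
              charge_below: float = 0.05, discharge_above: float = 0.10) -> float:
    """Return grid electricity cost (USD) over hourly prices with a threshold battery.

    prices:       USD/kWh for each one-hour step.
    load_kw:      constant data center load; equals kWh per one-hour step.
    capacity_kwh: usable battery energy (0 disables the battery).
    power_kw:     max charge or discharge per hour.
    efficiency:   round-trip efficiency, applied when charging.
    charge_below, discharge_above: price thresholds (charge_below < discharge_above).
    """
    soc = 0.0   # battery state of charge, kWh
    cost = 0.0
    for price in prices:
        grid_kwh = load_kw
        if price <= charge_below and soc < capacity_kwh:
            # Cheap hour: buy extra energy; only efficiency * draw ends up stored.
            draw = min(power_kw, (capacity_kwh - soc) / efficiency)
            soc += draw * efficiency
            grid_kwh += draw
        elif price >= discharge_above and soc > 0.0:
            # Expensive hour: serve part of the load from the battery instead of the grid.
            discharge = min(power_kw, soc, load_kw)
            soc -= discharge
            grid_kwh -= discharge
        cost += grid_kwh * price
    return cost


if __name__ == "__main__":
    # Assumed two-tier day: 12 off-peak hours at 4 c/kWh, 12 peak hours at 12 c/kWh.
    day = [0.04] * 12 + [0.12] * 12
    no_battery = grid_cost(day, load_kw=1_000)
    with_battery = grid_cost(day, load_kw=1_000, capacity_kwh=4_000, power_kw=1_000)
    print(f"without battery: ${no_battery:,.2f}")
    print(f"with battery:    ${with_battery:,.2f}")
```

In this assumed scenario the battery shifts 4,000 kWh from the 12-cent peak into the 4-cent off-peak window; after charging losses, the daily grid bill falls from about $1,920 to about $1,618.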

The 2026 Energy-Compute Inversion

We are witnessing a historic inversion. Tech firms used to buy power to run chips; now energy firms are buying chips to “monetize” their surplus power. This AI Infrastructure Energy Arbitrage model is turning utility companies into the new “Shadow Hyperscalers.” If a utility can run inference on its own curtailed renewable energy, its marginal energy cost is effectively zero, making it nearly impossible for traditional cloud providers to compete on price.

[The Evidence]

- BloombergNEF: Average data center power purchase agreement (PPA) prices have surged 18% YoY, outpacing the revenue growth of mid-tier AI SaaS providers.

- IEA (Energy and AI report): Global data center electricity consumption is projected to more than double by 2030, reaching roughly 945 TWh, a load that threatens national grid stability.

To fully grasp the 2026 supply chain recalibration, we recommend exploring these interconnected reports:

- [The $110 Oil Shock]: How fossil fuel volatility dictates the floor price of AI inference.

- [The Sovereign AI Mirage]: Why national pride cannot bypass the physical laws of thermodynamics and power distribution.

- [The 1GW Power Wall]: Analyzing the technical failure points of upcoming “Mega-Clusters.”

[The Sharp Question]

If energy premiums push the cost of an AI-generated answer above the cost of a human producing it, does the “Compute Bubble” pop from the bottom up, or do we simply accept a world where intelligence is a luxury good reserved for the energy-rich? Is AI Infrastructure Energy Arbitrage the savior of the industry, or merely the last gasp of a model that ignored the physical limits of our planet?

AI Infrastructure, Energy Arbitrage, TCO Analysis, AI ROI 2026, Grid Constraints, SMR, Sovereign AI, Big Tech Capex, Inference Economics, Tech Macro 2026