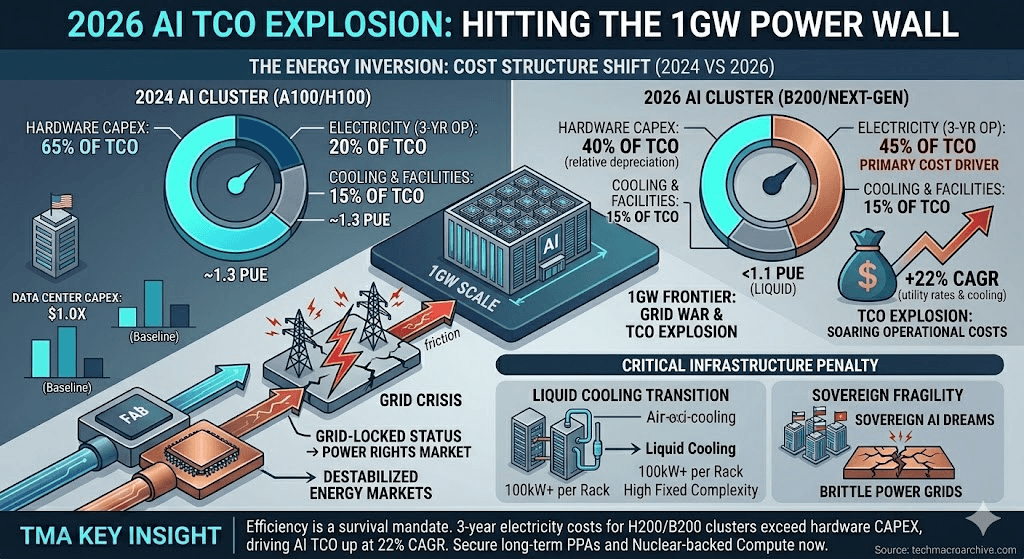

In 2026, AI scaling hits the 1GW Power Wall. Beyond GPU specs, the soaring TCO of energy is the silent killer of Sovereign AI dreams.

Stop obsessing over FLOPS. In 2026, the metric that matters is megawatts. The industry spent years bragging about model parameters while ignoring the power grid, and now the bill has arrived. We are hitting the 1GW Power Wall: the point where a single AI cluster draws as much power as a mid-sized city. This isn’t just an engineering hurdle; it is a financial guillotine. If your Sovereign AI strategy doesn’t account for the fact that energy costs now outweigh silicon depreciation, you aren’t building a future; you’re building an expensive furnace.

[Executive Summary]

- The Energy Inversion: For the first time, 3-year electricity costs for H200/B200 clusters exceed the initial hardware CAPEX.

- 1GW Frontier: The transition to gigawatt-scale data centers is triggering a “Grid War” between industrial sectors and AI operators.

- TCO Explosion: Total Cost of Ownership is rising at a 22% CAGR, driven solely by utility rate hikes and cooling infrastructure.

- Sovereign Fragility: Nations promising “AI Autonomy” are realizing their power grids are too brittle to support the very clusters they subsidized.
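To see what a 22% CAGR means in practice, here is a minimal sketch of the compounding. The 22% rate comes from the summary above; the starting index of 100 and the function name `compound_growth` are illustrative assumptions, not figures from any report.

```python
def compound_growth(base: float, cagr: float, years: int) -> float:
    """Value of `base` after `years` of growth at a constant annual rate `cagr`."""
    return base * (1.0 + cagr) ** years


# Index today's TCO at 100 (an arbitrary baseline) and apply the 22% CAGR.
for year in range(4):
    print(f"year {year}: TCO index {compound_growth(100.0, 0.22, year):.1f}")
```

At that rate the index passes 180 by year three, so a budget sized for today's TCO covers only a little over half of the bill by then.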

[Deep Analysis: The Thermodynamics of a Failed Investment]

What is the 1GW Power Wall?

The 1GW Power Wall is the infrastructure limit, reached in 2026, at which the power demand of a single AI training cluster (more than 1 gigawatt) exceeds the grid capacity available in most urban Tier-1 data center hubs. The result is a forced decoupling of compute location from administrative centers.
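For a sense of scale, a rough sketch of how much energy a constant 1 GW draw adds up to over a year. The `utilization` parameter and the function name are assumptions added for illustration; real clusters do not run at a flat load.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 h, leap years ignored


def annual_energy_twh(power_gw: float, utilization: float = 1.0) -> float:
    """Energy in TWh consumed in one year at an average draw of `power_gw`."""
    energy_gwh = power_gw * HOURS_PER_YEAR * utilization
    return energy_gwh / 1000.0  # GWh -> TWh


print(f"{annual_energy_twh(1.0):.2f} TWh per year at a constant 1 GW")
```

A flat 1 GW works out to 8.76 TWh per year, which is the number a grid operator has to find capacity for.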

In the 2026 macro environment, the “Yield War” has shifted from the fab to the power plant. We have optimized chip architecture, but we cannot optimize away the Second Law of Thermodynamics. A standard rack in 2026 demands 100kW or more, which pushes traditional air cooling into obsolescence and forces a transition to liquid cooling, adding another layer to the TCO.
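A back-of-the-envelope sketch of how rack density and cooling overhead translate into rack counts. It takes the 1 GW and 100 kW figures from the text; treating 1 GW as the total facility budget, and the PUE values passed in, are assumptions for illustration.

```python
import math


def racks_supported(facility_power_w: float, rack_power_w: float, pue: float) -> int:
    """Whole racks that fit inside a facility power budget at a given PUE."""
    it_power_w = facility_power_w / pue  # power left for IT after cooling/overhead
    return math.floor(it_power_w / rack_power_w)


# 1 GW facility budget, 100 kW racks, at two assumed PUE values.
for pue in (1.3, 1.1):
    print(f"PUE {pue}: {racks_supported(1e9, 100e3, pue)} racks")
```

Under these assumptions, moving from a PUE of 1.3 to 1.1 frees enough power for roughly 1,400 additional 100 kW racks within the same 1 GW budget.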

The AI Energy Inversion: Cost Structure Shift (2024 vs 2026)

| Cost Component | 2024 AI Cluster (A100/H100) | 2026 AI Cluster (B200/Next-Gen) | TCO Impact |
|---|---|---|---|
| Hardware CAPEX | 65% of TCO | 40% of TCO | Relative Depreciation |
| Electricity (3-Yr Op) | 20% of TCO | 45% of TCO | Primary Cost Driver |
| Cooling & Facilities | 15% of TCO | 15% of TCO | High Fixed Complexity |
| Energy Efficiency (PUE) | ~1.3 | <1.1 (Liquid) | Infrastructure Penalty |
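Readers who want to test the inversion against their own numbers can start from a sketch like the one below. It computes a three-year electricity bill from IT load, PUE and a flat tariff, and the electricity price at which that bill equals hardware CAPEX. Every input in the example (the CAPEX, the IT load, the $0.15/kWh tariff) is a placeholder assumption, not market data, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 h, leap years ignored


def electricity_cost_usd(it_load_kw, pue, price_per_kwh, years=3):
    """Electricity bill for running `it_load_kw` of IT load flat out for `years`."""
    facility_kw = it_load_kw * pue  # PUE scales IT load up to total facility draw
    return facility_kw * HOURS_PER_YEAR * years * price_per_kwh


def breakeven_price_per_kwh(capex_usd, it_load_kw, pue, years=3):
    """Electricity price at which the bill over `years` equals hardware CAPEX."""
    return capex_usd / (it_load_kw * pue * HOURS_PER_YEAR * years)


# Placeholder inputs (assumptions, not market data).
capex = 10_000_000.0  # hardware CAPEX in USD
it_load = 1_000.0     # IT load in kW
for pue in (1.3, 1.1):
    cost = electricity_cost_usd(it_load, pue, 0.15)
    price = breakeven_price_per_kwh(capex, it_load, pue)
    print(f"PUE {pue}: 3-yr electricity ${cost:,.0f}, breakeven ${price:.3f}/kWh")
```

By the table's 2026 shares, the electricity bill is 45/40, or about 1.1 times, hardware CAPEX. The breakeven function shows what tariff a given deployment would need for that to hold.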

The irony is sharp: governments are desperate for Sovereign AI to ensure national security, yet they are creating national insecurity by destabilizing their own energy markets. In Korea and Europe, the “Grid-Locked” status of new data centers has led to a secondary market for “Power Rights,” where the right to draw 100MW is traded more aggressively than the chips themselves.

The Sovereign AI dream is hitting a hard physical limit: you can print money to buy GPUs, but you cannot print electrons.

[The Evidence]

- Bloomberg: The 2026 Global Grid Crisis: Investigation into how AI clusters are outstripping national grid upgrades.

- IEA Electricity 2026 Report: Global electricity demand from data centers expected to double by 2026.

- Reuters: The Hidden TCO of Liquid Cooling: Financial breakdown of the infrastructure pivot required for B200-class deployments.

[The Sharp Question]

When your AI model finally achieves “Sovereignty,” but the cost of keeping it awake bankrupts your local utility provider, who is truly in control: the state, or the power meter?

Strategic Takeaway for 2026: Efficiency is no longer a green initiative; it is a survival mandate. In the 1GW era, the winners won’t be those with the most GPUs, but those who secured Long-term Power Purchase Agreements (PPAs) and Nuclear-backed Compute before the grid went dark.

#DataCenterOPEX #1GW_Cluster #SovereignAI_Trap #ComputingThermodynamics #AI_Sustainability_Crisis #2026MacroStrategy